What is Big Data and can it advance process performance?

Mike Edwards


As Julie Andrews sang in the movie “The Sound of Music,” the beginning is a very good place to start when it comes to big data.

By Deborah Grubbe

There are many different definitions of big data out there and that has led to confusion among many practitioners. For the purpose of this article, simple works best, and the definition used is straightforward and brief.

Big Data is nothing more than billions and billions of data points that have been collected over time. The time period can span decades, years, months, hours or minutes. The frequency of collection depends on what is being recorded, and can range from once per month down to once per second or per fraction of a second. The data can be in an electronic format, or it can be written in a laboratory notebook or an operator’s logbook. The current form of the data matters only in that it costs more to convert written data to digital form. Once all the data is digital and available for use, the analysis step comes next.

The key difficulty with big data is how to effectively manage and segregate these large data volumes. Luckily, recent developments in computational power and data storage have made big data analytics economically available to more operations.

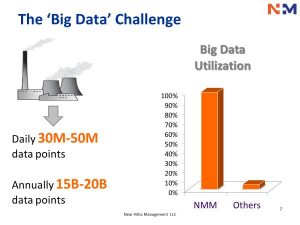

Source: Near-Miss Management.

Let’s look at the process and extraction industries, where complicated controls are the norm. Each operational unit is collecting a treasure trove of data every second of every day, and only a few firms are digging into the details of all of their data, analyzing it, converting it into actionable information, and then using that analysis to reduce risk, improve product quality or reduce cost. Your data could be your gold mine; however, you need tools to dig out those informational nuggets. Enter big data analytics.

The analyses associated with big data are complex and proprietary, yet once in place, they can be used as a troubleshooting aid, as a support to maintenance excellence, as a way to improve process safety, and as a management tool for making more informed decisions. A few firms are using the data in all of these ways and are learning details about their hardware and their operational techniques that are new to them, even though the plants may have been operating for decades.

Below are three real-life examples of big data analyses in which the analytics outperformed the existing operations and maintenance systems and gave operations personnel additional insights in a time frame that allowed for continued operation within safe limits.

Example 1:

The maintenance engineer who noted the vibration irregularities (via the big data analysis, not via the standard maintenance system analysis) recognized that the entire process unit was operating at a higher load than usual and that, if the compressor vibration problems continued, they could lead to a serious production problem or even a catastrophic outage. In particular, the anomalies in the axial vibration variables (mainly associated with the compressor and turbine) indicated conditions that were forcing the equipment to operate at significantly higher speeds than had been observed in the past. The engineer also noted that no alarms in the existing distributed control system had been activated for the vibration variables during this period, because the alarm limits were set far from the current operating range.

In this instance, the big data analytics made the operating team aware of these issues early on. The early discovery and investigation prompted the team to explore ways to run the rotary equipment without sacrificing its reliability and asset longevity. Additionally, they were able to change out the bearings in an orderly manner without a significant production loss and without any serious damage to a high-speed reciprocating compressor that contained a hazardous material.
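The gap described in this example, where readings drift well away from their historical pattern but stay below a fixed alarm limit, can be illustrated with a minimal sketch. This is not the proprietary analysis used in the example; it assumes a simple z-score against a historical baseline, and the function name, threshold, readings and alarm limit are all hypothetical.

```python
from statistics import mean, stdev

def flag_deviations(history, recent, alarm_limit, z_threshold=3.0):
    """Flag recent readings that are unusual relative to the historical baseline.

    Returns a list of (index, value, z_score, alarm_tripped) tuples.
    The z-score test is an illustrative stand-in, not a vendor's method.
    """
    baseline_mean = mean(history)
    baseline_std = stdev(history)
    if baseline_std == 0:
        raise ValueError("history has no variation; z-score is undefined")
    flagged = []
    for i, value in enumerate(recent):
        z = (value - baseline_mean) / baseline_std
        if abs(z) > z_threshold:
            # Record whether the fixed control-system alarm would also have fired.
            flagged.append((i, value, round(z, 1), value >= alarm_limit))
    return flagged

# Hypothetical axial vibration readings, in arbitrary units.
history = [2.0, 2.1, 1.9, 2.0, 2.2, 1.8, 2.1, 2.0, 1.9, 2.0]
recent = [2.1, 2.6, 2.9, 3.1]
alarm_limit = 6.0  # hypothetical fixed alarm setting, far above normal operation

flagged = flag_deviations(history, recent, alarm_limit)
for index, value, z, tripped in flagged:
    print(f"reading {index}: value={value}, z={z}, alarm tripped: {tripped}")
```

With these made-up numbers, the last three readings are flagged as abnormal while the fixed alarm stays silent, which is the situation the maintenance engineer encountered.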


Example 2:

In a different process facility, there was a control loop where the flow was adjusted manually, and the associated valve movement occurred automatically. For two consecutive days, the big data analytics software showed that the recycle flow was deviating from standard operation. The plant’s supervisors and operators were aware of the issue but had not brought it to the maintenance crew’s attention, partly because they did not realize how serious it had become. The extent of the problem became clear, and was acted upon, once the big data analytics software identified it as a high-risk item. Following these indications, the operating team submitted a maintenance work order for correction, and a serious upset was averted.

Example 3:

In yet another process unit, an operating team was using multiple sensors to monitor an important process variable, in this case a fluid level in a process vessel. Three redundant sensors measured this level. The control scheme was set up so that if two of the three sensors registered out of range, the emergency shutdown system would be triggered.

The big data analytics software indicated that one of the sensors was “acting strangely,” but this was not apparent from direct visual observation. Upon investigation, the operating team found an instrument fault in one of the sensors. They then asked the instrument mechanic to fix the faulty sensor and to investigate and address the factors that led to the fault. Their prompt action averted a potential unit shutdown, and it triggered additional work on how to make the sensors more reliable. Given the high likelihood that the other two sensors could become similarly affected, the operations team recognized the importance of this early detection by the software. An even more important lesson was the fact that the variability in the “sick sensor” was visible only through the big data analytics mathematics and was not directly observable by the operators.
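The two ideas in this example, two-out-of-three voting for the shutdown and spotting a sensor whose variability differs from its peers, can be sketched as follows. This is a simplified illustration under stated assumptions: the sensor names, level limits, readings and the "three times the median spread" rule are hypothetical and are not taken from the plant or from any vendor's software.

```python
from statistics import median, pstdev

def two_out_of_three_trip(readings, low, high):
    """Return True if at least two of the three readings are out of range."""
    out_of_range = [not (low <= r <= high) for r in readings]
    return sum(out_of_range) >= 2

def suspect_sensors(windows, ratio=3.0):
    """Return names of sensors whose spread exceeds `ratio` times the group's median spread.

    `windows` maps a sensor name to its recent readings. The ratio test is
    an assumed, illustrative rule for comparing redundant sensors.
    """
    spreads = {name: pstdev(values) for name, values in windows.items()}
    typical = median(spreads.values())
    return [name for name, spread in spreads.items() if spread > ratio * typical]

# Hypothetical level readings (percent) from three redundant transmitters.
windows = {
    "LT-A": [50.1, 50.0, 49.9, 50.0, 50.1, 49.9],
    "LT-B": [50.0, 50.1, 50.0, 49.9, 50.0, 50.1],
    "LT-C": [50.0, 51.2, 48.7, 50.9, 49.0, 51.1],  # noisier, but still in range
}

latest = [values[-1] for values in windows.values()]
trip = two_out_of_three_trip(latest, low=40.0, high=60.0)
suspects = suspect_sensors(windows)
print("shutdown triggered:", trip)
print("sensors to inspect:", suspects)
```

In this made-up data, every reading stays inside the trip limits, so the voting logic sees nothing wrong, yet the third transmitter stands out once its variability is compared with that of the other two.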

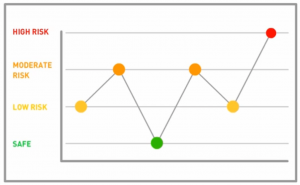

This chart is an example of how relative risk can change with time in an operating facility, based on analysis of billions of data points. Source: Near-Miss Management.

Consider how many process upsets could be avoided if attentive control room operators and maintenance mechanics had big data analytics at their disposal. Operations teams could get advance warning of “things that were just starting to go wrong” and then have enough time to assess and complete a fix before there was a serious upset or release. Their fast action would avoid the dangers associated with a potential loss of containment, would make the work safer and would eliminate or reduce many of the exposures and injuries that are seen in the industry today.

After the proprietary big data analytics, which is any product’s “secret sauce,” the user interface is perhaps the second most critical feature to consider, as it is what the majority of the users actually see every time they use the tool. This interface cannot possibly list all the data, but the underlying data must be available. The average user is normally interested in the “grossed-up” information, not the individual data points.

However, the data must be available through successive and progressive menus if the review indicates the need to examine additional detail. The user interface must be easy to read, and the “opportunities” must be visible and be quickly “brought forward.” It is also helpful if the identified issues can be triaged by the software, so that the team is addressing the most critical issues first.
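As a rough illustration of software triage, the sketch below ranks identified issues so that the most critical ones appear first. The `Issue` fields, the tags and the 0-to-1 risk score are hypothetical; how a real product scores risk is proprietary and is not described in this article.

```python
from dataclasses import dataclass

@dataclass
class Issue:
    tag: str            # hypothetical equipment or instrument tag
    description: str
    risk_score: float   # assumed score from 0 (benign) to 1 (critical)

def triage(issues, top_n=3):
    """Return the `top_n` issues with the highest risk score, highest first."""
    return sorted(issues, key=lambda issue: issue.risk_score, reverse=True)[:top_n]

# Made-up issues for illustration only.
issues = [
    Issue("FIC-201", "recycle flow deviating from normal pattern", 0.72),
    Issue("LT-C", "level transmitter variability rising", 0.55),
    Issue("K-101", "compressor axial vibration trending up", 0.91),
    Issue("TI-330", "minor temperature drift", 0.18),
]

top_issues = triage(issues)
for issue in top_issues:
    print(f"{issue.risk_score:.2f}  {issue.tag}: {issue.description}")
```

The summary view would show only this short ranked list, with the underlying data points for each tag available through drill-down menus.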

A very effective use of the big data analytics tool is in the operations meeting that occurs at the beginning of every shift. Think of how differently the meeting would work if you had an insight into the current issues that were about to happen vs. the issues that occurred over the past few days and were under resolution. The maintenance and operations work changes could be significant! This subject will be discussed in more detail during the third and final part of this series.

Lastly, it is important, in today’s security-conscious world, for the data to stay in your location, on your server and under your control. When investigating analytics software, ask where the data will reside and whether it needs to be sent offsite for analysis. The safer bet is always to keep your data close, and your analyses even closer.

As has been discussed, big data analytics takes billions of data points and generates information and intelligence on how our facilities are operating. Good big data software is now able to give an operator a few days’ advance notice of a serious operational issue on the immediate horizon. Any operations manager will agree that when a number of alarms start going off, it will probably not be a good day. With big data analytics, an issue is identified long before any alarms start to sound. In fact, if the alarm goes off, it just may be too late…

This article is the first part of a 3-part series on big data and how the process and mining industries can use big data analytics to improve safety, improve operational efficiencies and reduce costs. The first part, above, is an introduction to what big data is and what it can do for you. The second article will focus on examples of effective big data applications in support of maintenance and operations. The last article will focus on the fact that you still need leadership when using a big data tool, and that a big data implementation will most likely change your work process.

In Part II of the series, we will take a closer look at more examples of big data applications.

Deborah Grubbe, PE, CEng. NAC, is owner and president of Operations and Safety Solutions (OPSS), a global consultancy that works with various industries. Grubbe is a former member of the NASA Aerospace Safety Advisory Panel, and worked on the U. S. Chemical Weapons Stockpile Demilitarization. She serves on numerous advisory boards and is an Emeritus Member of the Center for Chemical Process Safety.
